How the projects favored by DAT before the TGE will reshape the AI power landscape

Author: on-chain Highlights Source: medium

The Power Dilemma and Solutions in the Era of AI

The history of the internet has been a continuous contest between “openness” and “monopoly,” and power has inevitably drifted toward the technology groups that control data and computing power. A similar script is now playing out in AI, and the situation is even more severe. As model parameter counts expand at remarkable speed and global AI chip computing power is dominated by the cloud services of a few companies, a profound dilemma has emerged: a “digital oligopoly” more powerful than that of the internet era is taking shape, characterized by a triple monopoly over data, algorithms, and computing power. Within this framework, the massive personal data contributed by ordinary users is reduced to a “free means of production” for training models. Users cannot share in the enormous value their data creates, and innovation is stifled.

However, technological development always carries the seeds of change, and the emergence of blockchain has shown that power can be decentralized. Bitcoin distributed financial power across its nodes, while Ethereum returned application development rights to developers. The 0G project has emerged to play a similar role in AI and is highly anticipated. Its core goal is to build a decentralized AI incentive network that fundamentally breaks the existing power structure, returning the value of computing power, data, and models to every participant and making AI a truly inclusive public good.

Building AI Infrastructure through Technological Solutions

To democratize artificial intelligence, the fundamental pain points of traditional centralized infrastructure must be addressed: poor flexibility, performance bottlenecks, and low storage efficiency. 0G provides a complete set of innovative technical architectures to underpin the development of a decentralized AI economy.

Architecture addresses flexibility challenges

As models advanced from GPT-3 to GPT-4, with parameter scales reportedly increasing nearly a hundredfold, the drawbacks of the tightly coupled architecture of traditional AI systems, in which one change ripples through the whole, became apparent. Developers are forced to invest massive amounts of time and resources in restructuring and rebuilding the entire system. 0G instead disassembles the AI infrastructure, breaking it down into modules such as model training, data processing, and computing power that can operate and upgrade independently and communicate through standardized interfaces. Developers can change a specific module as needed, much like swapping out a phone case rather than replacing the entire phone. This design greatly reduces upgrade costs and shortens the overall development cycle, so that the infrastructure can keep pace with the evolution of AI technology and the disruption caused by technology updates is reduced.
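The idea of modules that talk through standardized interfaces can be illustrated with a minimal Python sketch. Everything here is hypothetical: the interface names (`DataModule`, `ComputeModule`), the toy modules, and the `Pipeline` class are invented for illustration and are not 0G's actual API. The point is only that one module can be swapped without touching the others.

```python
from typing import List, Protocol


class DataModule(Protocol):
    """Hypothetical standardized interface for a data-processing module."""
    def prepare(self, raw: List[str]) -> List[str]: ...


class ComputeModule(Protocol):
    """Hypothetical standardized interface for a compute module."""
    def run(self, batch: List[str]) -> int: ...


class LowercaseData:
    """One data-processing module: normalizes case only."""
    def prepare(self, raw: List[str]) -> List[str]:
        return [item.lower() for item in raw]


class DedupData:
    """A drop-in replacement that also removes duplicates."""
    def prepare(self, raw: List[str]) -> List[str]:
        return sorted({item.lower() for item in raw})


class CountingCompute:
    """Stand-in for training: simply counts whitespace-separated tokens."""
    def run(self, batch: List[str]) -> int:
        return sum(len(item.split()) for item in batch)


class Pipeline:
    """Wires modules together; it depends only on the interfaces."""
    def __init__(self, data: DataModule, compute: ComputeModule) -> None:
        self.data = data
        self.compute = compute

    def execute(self, raw: List[str]) -> int:
        return self.compute.run(self.data.prepare(raw))


raw = ["Hello world", "hello world", "Decentralized AI"]
pipeline = Pipeline(LowercaseData(), CountingCompute())
before = pipeline.execute(raw)   # 6 tokens, duplicates included

pipeline.data = DedupData()      # swap one module, leave the rest untouched
after = pipeline.execute(raw)    # 4 tokens after deduplication
```

Because `Pipeline` only relies on the `prepare` and `run` signatures, replacing the data module requires no change to the compute module or to the pipeline itself.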

Revolutionary breakthrough in performance

Whether it is the instant response of AI agents or the millisecond-level decision-making of autonomous driving, both impose strict requirements on the processing speed and throughput of the underlying network. To support future large-scale, real-time AI transaction demands, the 0G network is designed to reach 11,000 TPS of transaction processing and throughput of up to 50 GB/s. If an urban traffic scheduling AI were deployed on 0G, it could process thousands of real-time traffic light statuses and road conditions and make optimal decisions in an instant, eliminating judgment errors caused by network latency. This performance guarantee is the key to moving AI applications from the laboratory into real life.
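To put the two design targets in perspective, here is a small back-of-the-envelope calculation in Python. It assumes decimal units (1 GB = 10^9 bytes) and treats the stated figures as sustained rates, which the article does not confirm; the function names are illustrative only.

```python
TARGET_TPS = 11_000                    # design target quoted above
TARGET_BYTES_PER_S = 50 * 10**9        # 50 GB/s, assuming decimal gigabytes


def transactions_in_window(window_ms: int) -> int:
    """Transactions that fit in a time window at the target rate."""
    return TARGET_TPS * window_ms // 1000


def seconds_to_move(size_bytes: int) -> float:
    """Time to move a payload at the target throughput."""
    return size_bytes / TARGET_BYTES_PER_S


updates_per_100ms = transactions_in_window(100)   # 1100
one_terabyte_seconds = seconds_to_move(10**12)    # 20.0
```

Under these assumptions, a 100 ms decision window leaves room for about 1,100 transactions, and a 1 TB dataset would take about 20 seconds to move.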

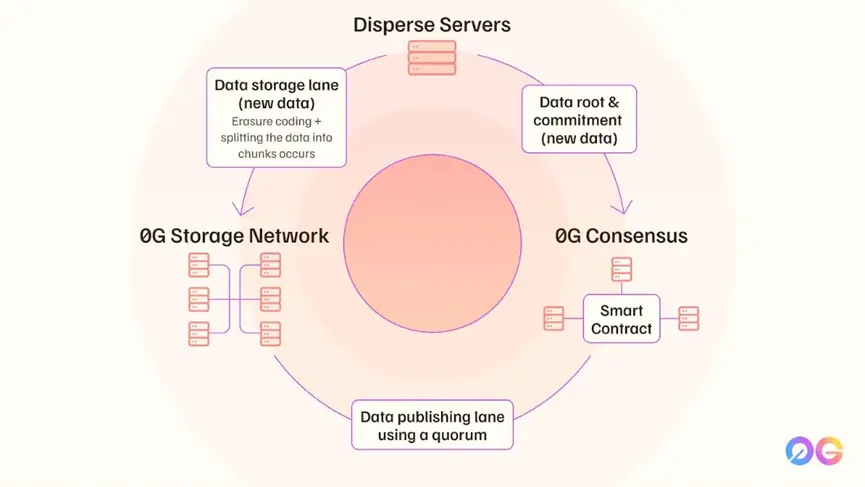

Infinite sharding architecture built on erasure coding

The 0G mainnet adopts an innovative architecture with erasure coding design and systematic artifact processing, upgrading the traditional hot and cold data tiering model. For example, an original data block can be split into more than 3,000 encrypted shards, with dynamic coding algorithms balancing data availability against storage efficiency. Under ideal conditions, a network of N nodes can reach a write capacity of N × 35 MB/s. This is possible because each storage node stores a unique shard and a random sampling verification mechanism is used, so capacity scales linearly with the number of nodes carrying load. In this way, the storage design reconstructs the AI data management paradigm.
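The principle behind erasure coding can be shown with a deliberately simple Python sketch. It uses a single XOR parity shard, which tolerates the loss of any one shard; this is a toy stand-in chosen for brevity, not the coding scheme 0G uses, and it omits encryption. The helper `ideal_write_capacity_mb_s` just restates the N × 35 MB/s figure from the text.

```python
from functools import reduce
from typing import List, Optional

PER_NODE_WRITE_MB_S = 35  # per-node figure quoted in the article


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> List[bytes]:
    """Split data into k equal shards and append one XOR parity shard."""
    shard_len = -(-len(data) // k)                  # ceiling division
    padded = data.ljust(shard_len * k, b"\0")       # zero-pad the tail
    shards = [padded[i * shard_len:(i + 1) * shard_len] for i in range(k)]
    return shards + [reduce(xor_bytes, shards)]


def decode(shards: List[Optional[bytes]], original_len: int) -> bytes:
    """Rebuild the data even if one shard (marked None) is missing."""
    missing = [i for i, s in enumerate(shards) if s is None]
    if len(missing) > 1:
        raise ValueError("single parity can recover only one lost shard")
    full = list(shards)
    if missing:
        present = [s for s in shards if s is not None]
        full[missing[0]] = reduce(xor_bytes, present)  # XOR of the rest
    return b"".join(full[:-1])[:original_len]          # drop parity, padding


def ideal_write_capacity_mb_s(n_nodes: int) -> int:
    """Ideal aggregate write capacity when every node stores a unique shard."""
    return n_nodes * PER_NODE_WRITE_MB_S


data = b"decentralized AI storage"
shards: List[Optional[bytes]] = list(encode(data, k=4))
shards[2] = None                                   # simulate a lost shard
recovered = decode(shards, len(data))
```

Production systems typically use codes such as Reed-Solomon that survive many simultaneous losses, but the recovery logic follows the same pattern: any sufficiently large subset of shards reconstructs the original block.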

The breakthroughs in core technology are reflected in the following three aspects:

- Data path decoupling: The “storage verification channel” is completely separated from the “data publication channel,” and consensus is reached solely through aggregate signatures and KZG commitments, which addresses the broadcasting bottleneck of traditional blockchains.
- Layered storage: Computing and storage components can be deployed independently and combined on demand. When training models with hundreds of billions of parameters, the dataset can be hashed to the L1 layer and processed as sharded data with the help of cyclic nodes.
- Unlimited sharding expansion: The design theoretically supports EB-level data storage and is well suited to AI scenarios with massive data throughput. With a dynamic re-staking mechanism, the decision layer can be scaled horizontally without interrupting services.
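The separation of data publication from storage verification, combined with random sampling, can be sketched as follows. This is a simplified illustration under stated assumptions: SHA-256 digests stand in for KZG commitments (which have different cryptographic properties), aggregate signatures are omitted, and the function names are hypothetical rather than part of any 0G interface.

```python
import hashlib
import random
from typing import Dict, List


def commit(shard: bytes) -> str:
    """Stand-in commitment: a SHA-256 digest instead of a KZG commitment."""
    return hashlib.sha256(shard).hexdigest()


def publish(shards: List[bytes]) -> List[str]:
    """Publication path: only small commitments are broadcast, not the data."""
    return [commit(s) for s in shards]


def sample_verify(node_store: Dict[int, bytes], commitments: List[str],
                  samples: int, rng: random.Random) -> bool:
    """Verification path: spot-check a random subset of stored shards."""
    for i in rng.sample(range(len(commitments)), samples):
        shard = node_store.get(i)
        if shard is None or commit(shard) != commitments[i]:
            return False
    return True


shards = [f"shard-{i}".encode() for i in range(16)]
commitments = publish(shards)

honest_store = dict(enumerate(shards))
corrupt_store = {i: b"garbage" for i in range(16)}

honest_ok = sample_verify(honest_store, commitments, 4, random.Random(0))
corrupt_ok = sample_verify(corrupt_store, commitments, 4, random.Random(0))
```

The consensus layer in this sketch handles only the list of commitments, whose size is independent of the shard contents, while the bulk data stays with storage nodes and is checked probabilistically.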

The architecture compresses data access latency to the microsecond level, reduces storage costs by 50%, and provides initial support for multi-formula calculations. When handling complex tasks such as medical image analysis, the system can automatically schedule nearby shards to carry out real-time inference rather than migrating the complete dataset. This makes 0G the only decentralized storage solution that meets the demands of both AI training and real-time applications.

From technology to ecosystem: market validation and real-world adoption

Whether a cutting-edge technology succeeds ultimately depends on whether it can win market recognition and build a thriving ecosystem. Through practical applications and collaboration, 0G is proving its commercial value.

In January of this year, Alibaba Cloud, a global leader in cloud computing services, announced a partnership with 0G to promote the development of next-generation Web3 and AI infrastructure in the Asia-Pacific region. The collaboration is strategically significant because it combines 0G's standardized decentralized AI technology stack with Alibaba Cloud's industry-leading computing power, vast customer base, and extensive regional influence. It paves the way for traditional enterprises and developers to adopt decentralized technologies at scale, and it represents not just a technology integration but an important bridge between the Web2 and Web3 worlds.

Specifically, the collaboration will focus on several core areas. The two parties will integrate cloud infrastructure with 0G's decentralized AI storage to support data-intensive applications such as large-scale AI training, inference, and verifiable computing. They will also jointly run activities such as workshops and hackathon mentor programs to empower the next generation of developers and innovators in the Asia-Pacific region and encourage them to explore the convergence of AI, cloud computing, and blockchain technology.

In this process, Cloudician Technology, a professional Web3 infrastructure service provider, provides key support to help accelerate the integration of AI and Web3 in the Asia-Pacific region. The partnership is far more significant than an ordinary technology collaboration: it signals that 0G's technical architecture and ecosystem vision have been recognized by a top cloud service provider. Going forward, the two parties will work together to create an open, composable, and innovation-driven digital future for developers and enterprises.

The confidence of publicly listed companies to engage in such comprehensive cooperation stems from the technological breakthroughs 0G has achieved. Recently, 0G used a cluster to complete the training of a large model with 107 billion parameters over low-bandwidth internet connections, a result considered a milestone in decentralized AI. Compared with related research conducted by Google, this represents a 357-fold improvement in efficiency. It is precisely this technical foundation, together with the business collaboration with Alibaba Cloud, that has drawn the attention of the entire market.

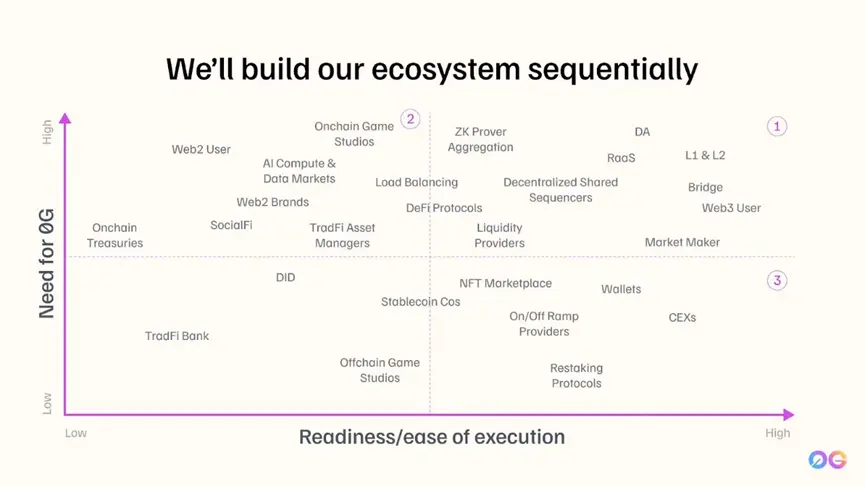

Notably, the diversity and quality of 0G's ecosystem partners are equally outstanding. The existing ecosystem includes not only Layer 2 technology platforms such as Optimism but also industry leaders such as IoTeX, which is deeply rooted in the Internet of Things and decentralized physical infrastructure (DePIN) field. Collaboration with industry leaders accelerates the commercialization of specific scenarios, while partnerships with technology platforms strengthen 0G's underlying capabilities. This dual-track “industry leader + technology platform” cooperation model gives the 0G ecosystem strong potential for explosive growth.

Future Vision: When AI Innovation Returns to the Public

0G not only innovates at the technical level but also reshapes the relations of production in the AI era, returning AI innovation to the public. With a decentralized network, developers gain genuine technical autonomy and can innovate freely without being locked in by platforms. Ordinary users can turn their digital footprints (chat records, health data, consumption habits) into authorized, tradable personal assets through the blockchain's ownership-confirmation mechanism, and every data contribution can earn a fair economic return.

“Training AI models on consumer-grade devices” is the ultimate form of this vision. Imagine a high school teacher on the 0G network collaborating with peers to train a personalized tutoring AI that truly understands local students' needs, using years of accumulated teaching notes and classroom data and renting professional computing clusters at market prices when more power is needed. A farmer can share data on the soil, climate, and crop growth of their farmland to collaboratively train a precision agriculture model suited to local water and soil conditions, and continue to benefit from the application of that model.

When the right to create with AI is no longer the exclusive preserve of a few technological elites but a tool within reach of billions of ordinary people, the relationship between humans and AI shifts from passive service to active collaboration and co-creation. We may find that the true AI revolution is not about how many parameters a model adds, but about everyone finding their unique value amid continuous growth and change and sharing in the rewards they deserve. This is the future of technological development that is truly worth looking forward to.