Gen-2's new "Ma Liang's magic brush" feature goes viral, and netizens can't wait

Article source: QbitAI

Image source: Generated by Unbounded AI

Has AI video generation really come this far?!

Brush over part of a photo and the object you selected starts moving!

It is clearly a stationary truck, but one brush stroke sets it driving, and even the light and shadow are rendered convincingly:

What was just a photo of a fire becomes flames leaping skyward with one swipe, and you can almost feel the heat:

At this rate, it will be impossible to tell photos from real videos!

This turns out to be a new feature Runway has built for its AI video tool Gen-2: paint over an object in an image and it starts to move, with realism that rivals the magic brush of Ma Liang in the Chinese folk tale.

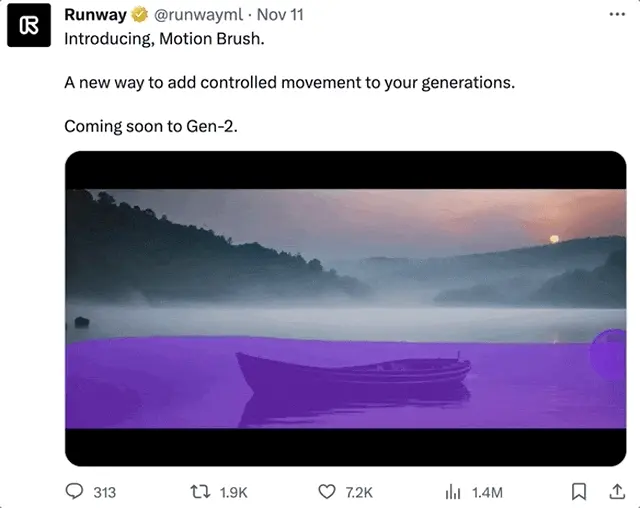

Although this is only a teaser for the feature, the demos went viral as soon as they were posted:

Netizens grew impatient one after another, saying they "can't wait to try it":

Runway has also released more teaser demos of the feature. Let's take a look.

Photo to video: whatever you point at moves

Runway's new feature is called Motion Brush.

As the name suggests, you only need to "paint" over any object in the picture with this brush to make it move.

It can be a person in a still photo, and even the movement of the skirt and head looks natural:

It can also be flowing water such as a waterfall, and even the mist is reproduced:

Or a cigarette that is still burning:

A bonfire burning in front of everyone:

Larger background regions can also be animated, even changing the lighting of the whole picture, as with these fast-moving dark clouds:

Of course, in all of these examples Runway is showing its hand, openly telling you which part of the photo it altered.

The following videos, shown without any brush marks, are almost impossible to identify as AI-modified:

With one demo after another going viral, netizens can't wait, even though the feature has not been officially released.

A lot of people are trying to work out how the feature is implemented. Other netizens care more about when it will launch, and hope the link will simply go live on a "3, 2, 1" countdown (doge).

It is indeed something to look forward to.

But Runway's new Motion Brush is not the only development.

The recent flurry of progress in AI generation suggests that video generation really is on the verge of a technological explosion.

Is AI-generated video really on the rise?

Just in the past few days, some netizens have found a new way to use the popular text-to-animation tool AnimateDiff.

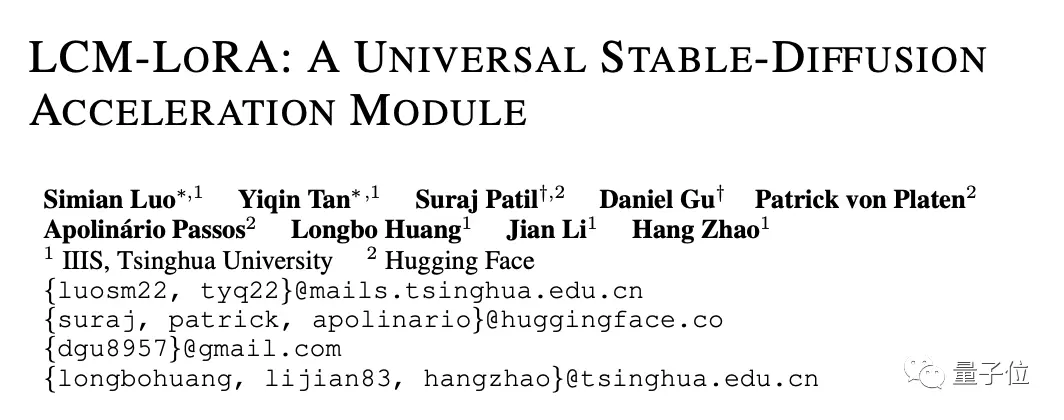

Combined with the recent research LCM-LoRA, it takes only 7 seconds to generate a 16-frame animated video.

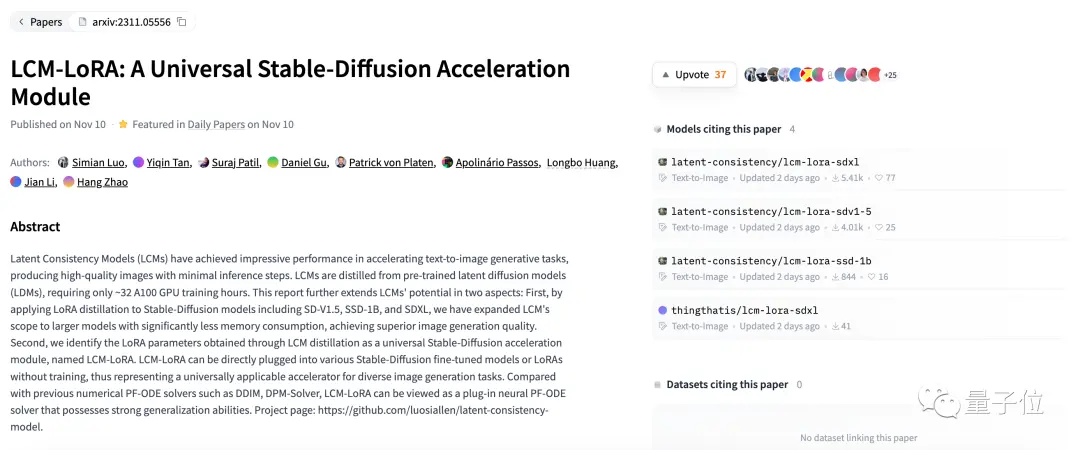

LCM-LoRA is a new AI image generation technique from Tsinghua University and Hugging Face that can greatly speed up image generation with Stable Diffusion.

LCM (Latent Consistency Models) is a new image generation method based on the "consistency models" OpenAI proposed earlier this year, and it can quickly generate high-resolution 768×768 images.

However, LCM is not compatible with existing models, so researchers from Tsinghua University and Hugging Face released LCM-LoRA, which is compatible with all Stable Diffusion models and speeds up image generation.
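For readers who want to see what attaching LCM-LoRA to a Stable Diffusion model might look like, here is a minimal sketch using the Hugging Face `diffusers` library. It covers still-image generation only, not the AnimateDiff workflow. The model ID, adapter ID, step count, guidance scale, and prompt are all assumptions chosen for illustration and do not come from the article; the sketch also assumes `torch`, `diffusers`, and `peft` are installed and a CUDA GPU is available.

```python
# Sketch only: the IDs and settings below are assumptions, not taken from the article.
BASE_MODEL = "runwayml/stable-diffusion-v1-5"    # assumed Stable Diffusion checkpoint
LCM_LORA = "latent-consistency/lcm-lora-sdv1-5"  # assumed LCM-LoRA adapter on Hugging Face
LCM_STEPS = 4                                    # assumed: LCM needs only a few denoising steps


def seconds_per_frame(total_seconds: float, frames: int) -> float:
    """Average generation time per frame."""
    return total_seconds / frames


def build_lcm_pipeline(device: str = "cuda"):
    """Load a Stable Diffusion pipeline and attach the LCM-LoRA adapter."""
    # Imported lazily so the helper above works without the heavy dependencies.
    import torch
    from diffusers import DiffusionPipeline, LCMScheduler

    pipe = DiffusionPipeline.from_pretrained(BASE_MODEL, torch_dtype=torch.float16)
    # Swap in the LCM scheduler, then load the LoRA weights on top of the base model.
    pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)
    pipe.load_lora_weights(LCM_LORA)
    return pipe.to(device)


def generate(prompt: str, out_path: str = "out.png") -> None:
    """Generate one image with a small number of steps and save it."""
    pipe = build_lcm_pipeline()
    image = pipe(prompt, num_inference_steps=LCM_STEPS, guidance_scale=1.0).images[0]
    image.save(out_path)
```

Calling `generate("a bonfire at night")` would download the weights and write one image. `seconds_per_frame(7, 16)` turns the article's figure of 16 frames in about 7 seconds into an average time per frame.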

Combined with AnimateDiff, it takes only about 7 seconds to generate an animation like this:

LCM-LoRA has now been open-sourced on Hugging Face.

What do you think of the recent progress in AI video generation, and how far is it from being usable?
